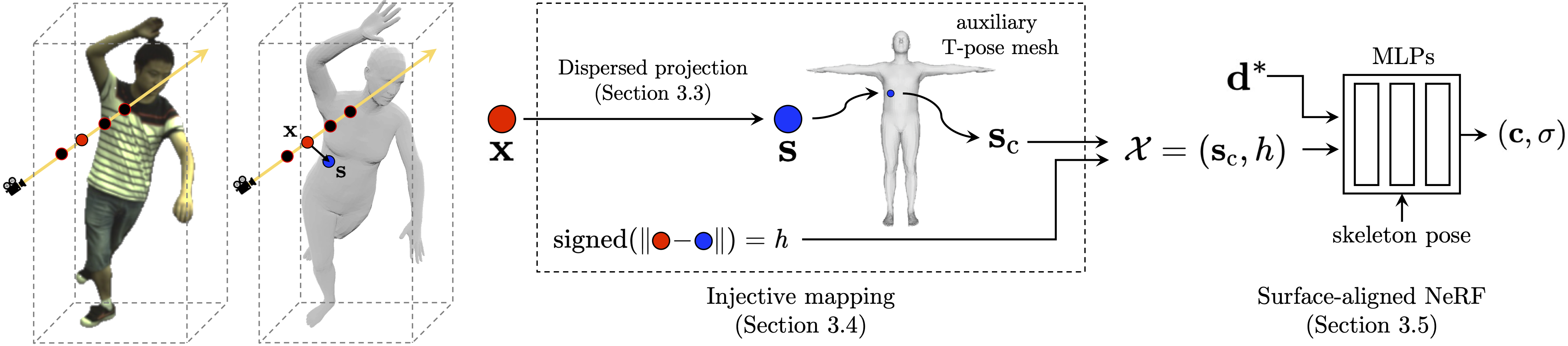

Overview

Given a query point \( \mathbf{x} \in \mathbb{R}^3 \), we use the proposed dispersed projection to map it to a point \( \mathbf{s} \in \mathbb{R}^3 \) on the mesh surface, from which we obtain a surface-aligned representation \( \mathcal{X} \). The representation \( \mathcal{X} \) and the view direction \( \mathbf{d}^* \) are then fed to the NeRF, which predicts the color \( \mathbf{c} \) and density \( \sigma \) at the query point \( \mathbf{x} \).
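To make the projection step concrete, the following is a minimal sketch of mapping a query point onto a triangle mesh. It uses plain nearest-point projection as a stand-in and is not the dispersed projection proposed here, whose definition is not given in this passage. The function names `closest_point_on_triangle` and `project_to_mesh`, the brute-force search over faces, and the returned distance `h` are all assumptions made for illustration. How \( \mathcal{X} \) is built from \( \mathbf{s} \) is likewise left out, so the sketch stops at the surface point.

```python
import numpy as np


def closest_point_on_triangle(p, a, b, c):
    """Closest point to p on triangle (a, b, c), via Voronoi-region tests."""
    ab, ac, ap = b - a, c - a, p - a
    d1, d2 = ab @ ap, ac @ ap
    if d1 <= 0 and d2 <= 0:
        return a  # vertex region of a
    bp = p - b
    d3, d4 = ab @ bp, ac @ bp
    if d3 >= 0 and d4 <= d3:
        return b  # vertex region of b
    vc = d1 * d4 - d3 * d2
    if vc <= 0 and d1 >= 0 and d3 <= 0:
        return a + (d1 / (d1 - d3)) * ab  # edge region of ab
    cp = p - c
    d5, d6 = ab @ cp, ac @ cp
    if d6 >= 0 and d5 <= d6:
        return c  # vertex region of c
    vb = d5 * d2 - d1 * d6
    if vb <= 0 and d2 >= 0 and d6 <= 0:
        return a + (d2 / (d2 - d6)) * ac  # edge region of ac
    va = d3 * d6 - d5 * d4
    if va <= 0 and (d4 - d3) >= 0 and (d5 - d6) >= 0:
        w = (d4 - d3) / ((d4 - d3) + (d5 - d6))
        return b + w * (c - b)  # edge region of bc
    denom = 1.0 / (va + vb + vc)
    return a + ab * (vb * denom) + ac * (vc * denom)  # interior of the face


def project_to_mesh(x, vertices, faces):
    """Stand-in projection (assumption): nearest surface point s and distance h.

    Brute-force loop over all faces; assumes non-degenerate triangles.
    """
    best_s, best_h = None, np.inf
    for i, j, k in faces:
        s = closest_point_on_triangle(x, vertices[i], vertices[j], vertices[k])
        h = np.linalg.norm(x - s)
        if h < best_h:
            best_s, best_h = s, h
    return best_s, best_h


# Toy mesh (hypothetical): a single triangle in the z = 0 plane.
vertices = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
faces = np.array([[0, 1, 2]])
s, h = project_to_mesh(np.array([0.25, 0.25, 1.0]), vertices, faces)
```

Nearest-point projection is chosen here only because it is simple to state and verify. A NeRF taking \( \mathcal{X} \) and \( \mathbf{d}^* \) as input would consume whatever representation is derived from the projected point.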